The mandate for modern SaaS platforms is no longer just to organize human workflows but to execute the work itself. As product teams rush to embed generative AI into their applications, shifting from static tools to intelligent agents, they are running into a wall of infrastructure debt. Managing GPU clusters, queuing systems, model fallback logic, and complex asynchronous architectures is quickly turning SaaS providers into accidental cloud infrastructure companies. The solution lies in adopting unified API pipelines that handle orchestration, moderation, and execution on managed infrastructure outside the product’s own stack. By decoupling the intelligence layer from the infrastructure layer, product teams can ship robust, multi-step AI features faster, protect their gross margins, and avoid lock-in to foundation models that depreciate rapidly.

From Static Workflow to Autonomous Work Product

For the past decade, Software-as-a-Service has been defined by user-driven data entry and workflow management. The application provided the canvas; the human provided the labor. That paradigm is breaking down. Customers now expect their software to generate the output autonomously. According to Sequoia Capital, AI is shifting the industry from software-as-a-service to “service-as-software,” in which the product delivers the final work product directly.

Consider an e-commerce Product Information Management (PIM) platform. Three years ago, a PIM was simply a database for merchants to store SKUs, descriptions, and static studio photography. Today, an e-commerce platform that does not automatically generate localized marketing copy, remove messy backgrounds from vendor-supplied images, upscale low-resolution user uploads, and synthesize lifestyle product photography is rapidly becoming obsolete. The competitive moat is no longer just a slick user interface; it is the embedded intelligence that saves the end-user hours of manual labor.

However, building these capabilities into a multi-tenant SaaS architecture requires fundamentally different engineering muscles. Traditional web application development relies on deterministic databases and microservices. Generative AI introduces massive latency variability, probabilistic outputs, and compute-intensive workloads that break traditional synchronous request-response cycles. When product teams attempt to retrofit complex machine learning pipelines onto their existing web architecture, the resulting friction drastically slows down the product roadmap.

The GPU Trap and the Collapse of SaaS Margins

The most dangerous trap for a SaaS company transitioning to an AI-native model is attempting to build and manage its own inference infrastructure. Provisioning GPUs, managing container orchestration for massive foundation models, and handling the inevitable cold starts and node failures is a reliable way to erode margins. As noted by Andreessen Horowitz, AI companies structurally exhibit lower gross margins, often in the 50% to 60% range, compared with the 60% to 80% or higher typical of traditional SaaS businesses, largely because of heavy cloud compute costs.

When a SaaS engineering team decides to self-host image generation models or build custom queuing infrastructure for long-running video generation tasks, they take on an immense operational burden. GPUs are expensive to rent and even more expensive to leave sitting idle during off-peak hours. Building a resilient retry mechanism to handle webhook failures, rate limits, and network timeouts requires dedicated platform engineers.
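To give a sense of what even the simplest piece of that retry machinery involves, here is a minimal sketch of retrying a call with exponential backoff and jitter. The `TransientError` class, the `call_with_retry` helper, and its default parameters are illustrative assumptions, not part of any particular vendor’s SDK.

```python
import random
import time


class TransientError(Exception):
    """Hypothetical marker for retryable failures (rate limit, timeout)."""


def call_with_retry(task, max_attempts=4, base_delay=0.5, sleep=time.sleep):
    """Run task(); on TransientError, back off exponentially and retry."""
    for attempt in range(max_attempts):
        try:
            return task()
        except TransientError:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the failure to the caller
            delay = base_delay * (2 ** attempt)
            # Random jitter keeps many clients from retrying in lockstep.
            sleep(delay + random.uniform(0, delay / 2))
```

A production version would also need persistence across process restarts, dead-letter handling, and idempotency keys, which is where the dedicated platform engineering effort tends to go.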

Furthermore, Gartner predicts that at least 30% of generative AI projects will be abandoned after proof of concept by the end of 2025, citing poor data quality, inadequate risk controls, escalating costs, or unclear business value. For an e-commerce SaaS vendor or a digital agency CTO, managing CUDA versions and scaling inference nodes is a distraction from the core business: delivering value to merchants and brands. The strategic pivot is to treat AI infrastructure as a fully managed utility, relying on specialized platforms that abstract away the hardware layer completely.

Escaping Vendor Lock-In Through Unified Schemas

The pace of advancement in foundation models is rapid enough that today’s state-of-the-art model can be superseded within months. A SaaS product that hardcodes its backend to a specific provider’s API is accumulating a costly form of technical debt. According to analysis by The Information, the half-life of a frontier AI model’s dominance is shrinking, with leapfrog releases from major players occurring almost quarterly.

If your e-commerce platform uses one provider for image generation, a different open-source model for background removal, a specialized API for upscaling, and yet another vendor for text OCR, your engineering team is forced to maintain a brittle web of disparate schemas, authentication methods, and rate-limiting behaviors. When a better model is released—say, ByteDance releases a superior video generation model that outperforms your current vendor—switching requires a complete rewrite of the integration layer.

This fragmentation is why forward-thinking architecture relies on unified API surfaces. By standardizing the integration layer, platforms can swap underlying models without touching their core application code. Solutions like apiai.me provide a single, consistent catalog of ready-to-call AI tools, spanning from Google DeepMind’s Nano Banana for fast image generation to Flux Fill Pro for inpainting, accessible via a unified schema. This allows a SaaS company to upgrade to the latest models, route requests based on cost or availability, and maintain vendor independence, all while reducing the integration footprint within their own codebase.
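The idea of a unified schema can be illustrated with a small adapter registry. This is a sketch under stated assumptions: `ToolRequest`, `ToolResult`, `UnifiedClient`, the tool name `image.generate`, and both vendor adapters are hypothetical and do not describe the API of apiai.me or any other real provider.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Tuple


@dataclass(frozen=True)
class ToolRequest:
    tool: str      # logical capability, e.g. "image.generate"
    payload: dict  # same input shape regardless of the model behind it


@dataclass(frozen=True)
class ToolResult:
    tool: str
    model: str     # which backing model served the call
    output: dict


Adapter = Callable[[dict], dict]


class UnifiedClient:
    """Maps each logical tool to one (model name, adapter) pair."""

    def __init__(self) -> None:
        self._routes: Dict[str, Tuple[str, Adapter]] = {}

    def register(self, tool: str, model: str, adapter: Adapter) -> None:
        # Re-registering a tool swaps its backing model in one place.
        self._routes[tool] = (model, adapter)

    def call(self, request: ToolRequest) -> ToolResult:
        model, adapter = self._routes[request.tool]
        return ToolResult(request.tool, model, adapter(request.payload))


# Hypothetical vendor adapters: each would translate the shared payload
# into its vendor's format and normalize the response.
def vendor_a_generate(payload: dict) -> dict:
    return {"image_url": f"https://example.invalid/a/{payload['prompt']}"}


def vendor_b_generate(payload: dict) -> dict:
    return {"image_url": f"https://example.invalid/b/{payload['prompt']}"}


client = UnifiedClient()
client.register("image.generate", "vendor-a-v1", vendor_a_generate)
first = client.call(ToolRequest("image.generate", {"prompt": "mug"}))

client.register("image.generate", "vendor-b-v2", vendor_b_generate)  # the swap
second = client.call(ToolRequest("image.generate", {"prompt": "mug"}))
```

The application code that builds the `ToolRequest` and reads `output["image_url"]` is identical before and after the swap; only the registration line changes.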

Multi-Step Intelligence: Pipelines Over Prompts

Translating an AI capability from a novelty to an enterprise-grade SaaS feature requires moving beyond single, isolated API calls. Real-world business workflows are inherently multi-step. Generating a high-converting product image isn’t just about sending a text prompt to an image generator; it requires a complex orchestration of chained events.

Consider the workflow for automating a seller’s product listing on a marketplace:

1. Ingestion: The system receives a raw, low-quality photograph taken by a seller on a mobile phone.

2. Preprocessing: An AI model crops the image and removes the cluttered background.

3. Enhancement: An upscaling model increases the resolution and clarifies textures.

4. Generation: A generative model places the isolated product into a high-end lifestyle setting, applying appropriate lighting and shadows.

5. Moderation: The final image must be scanned to ensure it contains no unsafe, branded, or restricted content.

Attempting to orchestrate this sequence within a traditional backend requires maintaining complex state, handling failures at any node, and writing extensive boilerplate code to pass the output of one model as the input to the next. According to McKinsey & Company, realizing the economic potential of generative AI requires integrating models into end-to-end operational workflows, not just deploying standalone tools.

Pipeline architectures address this orchestration problem directly. With server-side orchestration, a product team can design a sequence of AI operations that, from the perspective of their own application, runs as a single call. The SaaS backend issues one request and receives the final, fully processed, and moderated asset, offloading state management and multi-node failure recovery to the pipeline provider.
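As a rough illustration of what such a pipeline does on the server side, the sketch below chains the listing workflow’s processing steps so that each step’s output becomes the next step’s input, and a failure reports which step broke. All names here (`run_pipeline`, `PipelineError`, `stub`, `LISTING_PIPELINE`) are hypothetical, and the steps are stand-ins that only record what would have been applied rather than calling real models.

```python
from typing import Callable, List, Tuple

Asset = dict
Step = Tuple[str, Callable[[Asset], Asset]]


class PipelineError(Exception):
    """Raised when a step fails; records the name of the failing step."""

    def __init__(self, step_name: str, cause: Exception) -> None:
        super().__init__(f"step '{step_name}' failed: {cause}")
        self.step_name = step_name


def run_pipeline(steps: List[Step], asset: Asset) -> Asset:
    """Feed the asset through each step in order, stopping at the first failure."""
    for name, step in steps:
        try:
            asset = step(asset)
        except Exception as exc:
            raise PipelineError(name, exc) from exc
    return asset


def stub(label: str) -> Callable[[Asset], Asset]:
    """Stand-in for a model call: returns a copy with the label appended."""
    def step(asset: Asset) -> Asset:
        return {**asset, "applied": asset["applied"] + [label]}
    return step


def moderate(asset: Asset) -> Asset:
    # Stand-in moderation check driven by a flag on the asset.
    if asset.get("unsafe"):
        raise ValueError("content rejected by moderation")
    return {**asset, "applied": asset["applied"] + ["moderated"]}


LISTING_PIPELINE: List[Step] = [
    ("preprocess", stub("background_removed")),
    ("enhance", stub("upscaled")),
    ("generate", stub("lifestyle_scene")),
    ("moderate", moderate),
]

result = run_pipeline(LISTING_PIPELINE, {"source": "seller_upload.jpg", "applied": []})
```

From the caller’s side there is one function call and one result, which is the property the paragraph above describes; the intermediate state lives entirely inside `run_pipeline`.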

Quality Gates and Autonomous Moderation

In B2B SaaS, the cost of a hallucination or an inappropriate output is severe. User trust is the currency of SaaS, and deploying unpredictable AI models directly to end-users is a massive liability. Therefore, embedding intelligence requires embedding autonomous quality control.

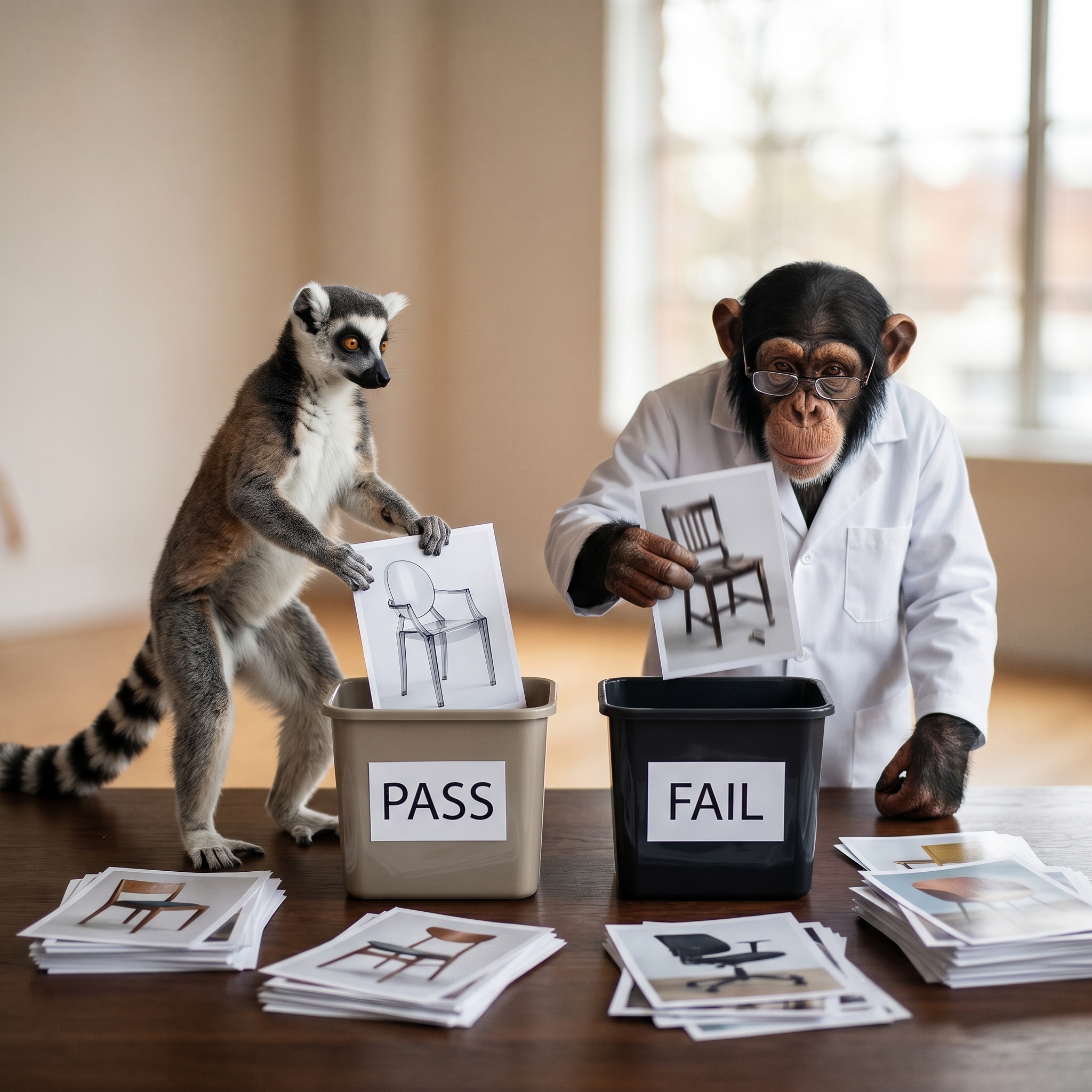

Recent research from Anthropic highlights the necessity of “constitutional” checks and automated evaluations in production AI systems to prevent toxic or off-brand outputs. In a pipeline architecture, this concept is implemented through Quality Gates.

Instead of blindly returning an AI-generated asset to a user, the pipeline dynamically scores the output against plain-English criteria. If a marketplace seller uses your SaaS tool to generate an avatar or a lifestyle image, a secondary, lightweight vision model can evaluate the result: Is the product clearly visible? Are there artifacts or warped text? Does it violate platform safety guidelines?

If the generated image fails the Quality Gate, the pipeline can automatically loop back, tweak the prompt, and retry the generation—or flag it for human review—all before the end-user ever sees a result. This automated evaluation ensures that the features you ship are safe, reliable, and enterprise-ready, significantly reducing the support burden on your internal teams.
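The loop described above can be sketched as follows. This is an illustration only: `generate_with_gate`, the 0.8 threshold, and the three stand-in functions are assumptions, with a trivial string check standing in for the vision model that would score real outputs.

```python
def generate_with_gate(generate, score, revise, prompt, threshold=0.8, max_attempts=3):
    """Generate, score, and retry with a revised prompt until the gate passes."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        output = generate(prompt)
        if score(output) >= threshold:
            return {"status": "approved", "output": output, "attempts": attempt}
        prompt = revise(prompt)  # tweak the prompt before the next try
    # Every attempt failed the gate: hand the last output to a human reviewer.
    return {"status": "needs_human_review", "output": output, "attempts": max_attempts}


# Stand-ins for the generator, the evaluator, and the prompt reviser.
def fake_generate(prompt):
    return f"image<{prompt}>"


def fake_score(output):
    return 0.9 if "sharp focus" in output else 0.4


def fake_revise(prompt):
    return prompt + ", sharp focus"


gated = generate_with_gate(fake_generate, fake_score, fake_revise, "ceramic mug on oak table")
```

Capping the number of attempts matters for cost as well as latency, since each retry is another billable generation plus another evaluation call.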

Accelerating Time-to-Market with Visual Prototyping

Time-to-market is the ultimate competitive advantage in the current software cycle. If it takes your engineering team three months to research, integrate, test, and deploy a new AI-driven product feature, you are already behind. According to Forrester, companies that adopt “AI-first” architectural patterns ship customer-facing features significantly faster than those trying to bolt AI onto legacy systems.

Traditionally, the bottleneck has been the translation between product management and engineering. A product manager might test prompts in a consumer web interface, but turning those tests into robust, error-handled production code requires weeks of engineering cycles.

This is where visual pipeline builders change the development lifecycle. Product teams can drag and drop nodes, connecting OCR to background removal and routing the output to an upscaler, while testing their logic in real time. Once the pipeline produces the desired output consistently, it is instantly available as a secure REST endpoint. The prototype is the production code. By using tools like the apiai.me Visual Prototyping environment, technical founders and product managers can bypass the friction of custom integration, enabling them to launch sophisticated features like automated avatars, content moderation flows, and intelligent document processing in days rather than quarters.

Margin Protection and Predictable AI Economics

Ultimately, a SaaS business is valued on its gross margins and predictable revenue. Integrating powerful AI features introduces variable costs that can easily spiral out of control if not carefully managed. A sudden spike in user engagement with a video generation feature can result in an astronomical cloud bill at the end of the month, turning a profitable customer into a loss leader.
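One common guard against this kind of runaway bill is to check a prepaid balance before every billable call. The sketch below is a minimal in-memory version; `PrepaidLedger`, `InsufficientCredits`, and the integer credit unit are illustrative assumptions, and a real system would need durable storage and atomic updates.

```python
class InsufficientCredits(Exception):
    """Raised when a tenant cannot cover the cost of a call."""


class PrepaidLedger:
    """Tracks prepaid credits per tenant in whole units to avoid float drift."""

    def __init__(self) -> None:
        self._balances = {}

    def top_up(self, tenant: str, credits: int) -> int:
        self._balances[tenant] = self._balances.get(tenant, 0) + credits
        return self._balances[tenant]

    def charge(self, tenant: str, cost: int) -> int:
        balance = self._balances.get(tenant, 0)
        if cost > balance:
            # Refuse the call up front instead of billing in arrears.
            raise InsufficientCredits(f"{tenant} has {balance}, needs {cost}")
        self._balances[tenant] = balance - cost
        return self._balances[tenant]


ledger = PrepaidLedger()
ledger.top_up("acme", 100)
remaining = ledger.charge("acme", 40)  # e.g. one video generation call
```

With this check in front of the expensive call, a usage spike exhausts the tenant’s credits instead of the operator’s margin.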

To defend unit economics, SaaS platforms must implement cost-aware routing and strict prepaid usage models. When utilizing a unified API platform, teams gain centralized visibility into their exact per-call costs across every tool and model. This allows for tier-based routing: requests from an enterprise user on a premium tier go to the most advanced, high-cost model, while requests from a freemium user go to a faster, highly optimized, lower-cost model.
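A minimal sketch of that routing rule, assuming each plan tier carries a per-call cost ceiling: pick the highest-quality model whose price fits under the ceiling. The model names, prices, quality ranks, and tier budgets below are invented for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ModelOption:
    name: str
    cost_cents: float  # assumed per-call price, in cents
    quality: int       # assumed ranking; higher is better


def choose_model(options: List[ModelOption], max_cost_cents: float) -> ModelOption:
    """Return the best-quality option within budget, preferring cheaper on ties."""
    affordable = [m for m in options if m.cost_cents <= max_cost_cents]
    if not affordable:
        raise ValueError("no model fits the per-call budget")
    return max(affordable, key=lambda m: (m.quality, -m.cost_cents))


# Hypothetical catalog and per-tier cost ceilings.
CATALOG = [
    ModelOption("fast-lite", 0.5, 1),
    ModelOption("balanced", 2.0, 2),
    ModelOption("frontier", 8.0, 3),
]
TIER_BUDGET_CENTS = {"freemium": 1.0, "premium": 10.0}


def route(tier: str) -> ModelOption:
    return choose_model(CATALOG, TIER_BUDGET_CENTS[tier])
```

Expressing the tiers as cost ceilings rather than hardcoded model names means a newly added model is picked up automatically by whichever tiers can afford it.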

By managing all AI interactions through a single billing interface—rather than juggling invoices from a dozen different model providers—SaaS operators can accurately calculate their Cost of Goods Sold (COGS). This predictability is essential for pricing new AI tiers, structuring usage limits, and ensuring that the addition of intelligence into the platform is accretive, rather than destructive, to the company’s valuation.
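To make the COGS calculation concrete, the following sketch computes per-customer AI cost from call counts and per-call prices, then derives gross margin as (revenue − COGS) / revenue. Every figure in it (call volumes, prices, the 15.00 of non-AI COGS, the 100.00 subscription) is a made-up example, not data from any provider.

```python
def ai_cogs(calls_by_tool: dict, price_per_call: dict) -> float:
    """Sum usage times unit price across every tool a customer touched."""
    return sum(count * price_per_call[tool] for tool, count in calls_by_tool.items())


def gross_margin(revenue: float, cogs: float) -> float:
    """Gross margin as a fraction of revenue: (revenue - cogs) / revenue."""
    if revenue <= 0:
        raise ValueError("revenue must be positive")
    return (revenue - cogs) / revenue


# Hypothetical monthly figures for one customer.
usage = {"image.generate": 200, "image.upscale": 100}
prices = {"image.generate": 0.04, "image.upscale": 0.01}
other_cogs = 15.00      # hosting, support, and other non-AI costs
subscription = 100.00   # monthly revenue from this customer

ai_cost = ai_cogs(usage, prices)
margin = gross_margin(subscription, other_cogs + ai_cost)
```

Running the same calculation with a heavier usage profile shows how quickly a flat-priced plan drifts toward the lower margins discussed earlier, which is the case for usage limits or metered AI tiers.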

Takeaways: The Playbook for AI-Native SaaS

For technical founders, agency CTOs, and platform engineers tasked with embedding AI into their products, the strategic roadmap should focus heavily on orchestration over infrastructure. The goal is to deliver magic to the end-user without maintaining the machinery that produces it.

- Refuse Infrastructure Debt: Do not build custom GPU clusters or manage complex asynchronous queues internally. Rely on managed execution and unified endpoints to handle the heavy lifting.

- Embrace Unified Schemas: Protect your platform from vendor lock-in and model obsolescence by integrating through a single API surface, allowing you to instantly swap to the latest frontier models.

- Build Pipelines, Not Prompts: Real business value requires multi-step operations. Chain tools like background removal, generation, and upscaling together in server-side pipelines.

- Automate Quality Control: Implement Quality Gates and auto-evaluations to score outputs before they reach the user, ensuring brand safety and reducing hallucination risks.

- Protect Your Margins: Utilize cost-aware routing and centralized billing to maintain the high gross margins that make the SaaS business model so lucrative.

By treating complex intelligence as a fully managed, API-first utility, SaaS platforms can rapidly evolve from simple workflow tools into autonomous, high-value agents, capturing market share without being crushed by the weight of their own infrastructure.