SaaS companies are racing to embed generative AI, but the biggest bottleneck isn’t model access—it’s quality control. To deploy AI image and video features without bleeding users, engineering teams must replace manual “vibe checks” with automated, programmatic evaluations at runtime. If your platform generates an AI avatar with six fingers or a product mockup with a mangled logo, the magic instantly becomes a liability. The solution lies in shifting from open-ended generation to closed-loop pipelines governed by strict, automated evaluation gates.

The “Vibe Check” Cannot Survive Contact with Production

When engineering teams prototype new AI features—whether it’s an interior design visualizer, an automated ad-creative generator, or an AI avatar generator—they typically rely on visual inspection. The developer runs a few dozen prompts, spots a few weird artifacts, tweaks the system prompt, and declares the feature ready for deployment. This informal “vibe check” is the enemy of scalable SaaS architecture.

Generative AI models are inherently non-deterministic. A prompt that yields a stunning, photorealistic product shot 95 percent of the time will hallucinate a melted background or an anatomically impossible human hand the other 5 percent of the time. When you are serving thousands of API requests per hour to paying customers, that failure rate means one in every twenty requests returns a broken result, which is a steady source of churn.

According to a thesis by Sequoia Capital on the generative AI market, the industry has moved past the “first act” of pure technological novelty; the current “second act” demands a rigorous focus on customer retention, which is impossible to maintain if product outputs are unreliable. Relying on end-users to act as your QA department by handing them a “regenerate” button is a poor user experience. SaaS platforms must treat visual outputs as code: they require testing, validation, and automated assertion before they are ever rendered on the client side.

The High Cost of Ungated AI Generation

For a SaaS business, the cost of a failed AI generation extends far beyond the fraction of a cent spent on the API call. It erodes user trust and severely damages brand equity. Imagine an e-commerce merchant using a recommerce platform’s AI tool to remove a background and place a vintage jacket onto a generated model. If the generated image features grotesque proportions, the merchant doesn’t just discard the image—they question the value of the entire software subscription.

Enterprise buyers are highly sensitive to these risks. Gartner has predicted that organizations that actively operationalize AI Trust, Risk, and Security Management (AI TRiSM) will see their AI models achieve a 50 percent improvement in adoption, business goals, and user acceptance. Ungated AI is, quite simply, a commercial liability.

Historically, the defense against bad content was human moderation. In the fast-moving world of digital ad-tech, agencies would manually review hundreds of creative variants before pushing them to campaigns. But human moderation operates at the speed of minutes or hours, completely breaking the synchronous loop expected in modern SaaS applications. When users click “Generate Marketing Assets,” they expect high-quality results in seconds. The only way to bridge the gap between non-deterministic models and the demand for deterministic SaaS reliability is through automated, machine-speed evaluations.

Enter the “Vision-Model-as-a-Judge” Era

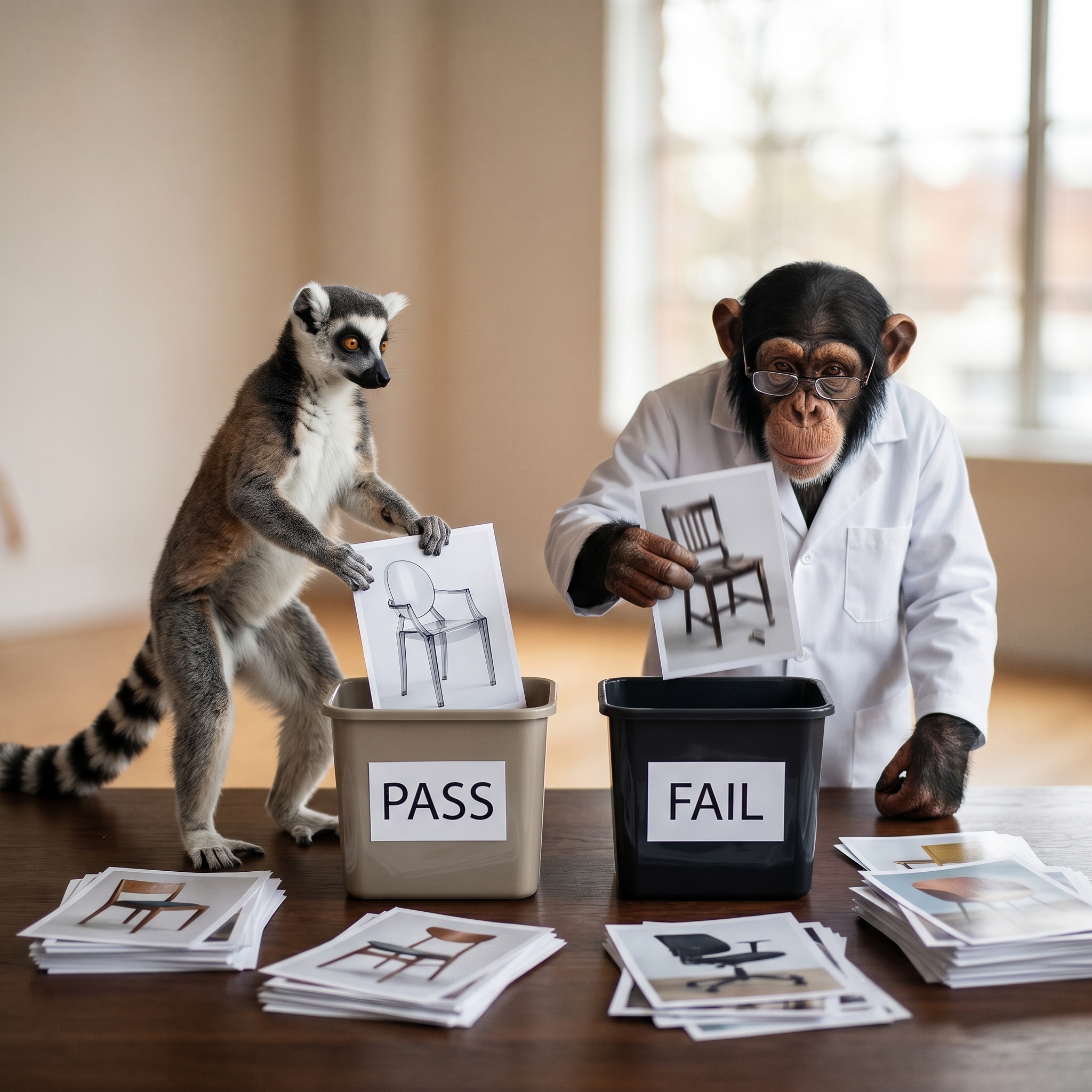

The text generation ecosystem addressed this problem with the “LLM-as-a-Judge” paradigm, in which a separate language model evaluates the output of a primary generative model against a rubric. The same architecture is now becoming mandatory for visual generation pipelines through the use of Vision-Language Models (VLMs).

Instead of relying solely on crude, pixel-level distance metrics or legacy CLIP scores—which fail to capture semantic errors like a car having five wheels—modern engineering teams are deploying multimodal models to grade generated imagery. According to recent preprint studies published on arXiv regarding text-to-image faithfulness evaluation, advanced VLMs are now capable of answering highly specific visual questions about generated images with accuracy that closely matches expert human annotators.

This means platforms can dynamically ask a secondary model: “Does this image contain distorted text?”, “Is the human figure anatomically proportionate?”, or “Does the lighting on the foreground object match the background?” If the evaluating model detects an anomaly, the system traps the error before the user is exposed to it.
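As a rough illustration of the question-and-answer check described above, the sketch below runs a fixed list of yes/no questions past an evaluating model and collects the ones that come back wrong. The `judge` callable, the `Check` type, and `stub_judge` are hypothetical stand-ins invented for this example; a real deployment would wrap whichever VLM the team actually uses.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence

# Hypothetical judge signature (an assumption for this sketch):
# takes image bytes and a question, returns the model's free-text answer.
Judge = Callable[[bytes, str], str]


@dataclass(frozen=True)
class Check:
    question: str        # yes/no question put to the evaluating model
    passing_answer: str  # "yes" or "no": the answer that means the image is fine


CHECKS = [
    Check("Does this image contain distorted text?", "no"),
    Check("Is the human figure anatomically proportionate?", "yes"),
    Check("Does the lighting on the foreground object match the background?", "yes"),
]


def normalize(answer: str) -> str:
    """Reduce a free-text reply to 'yes', 'no', or 'unclear' using its first word."""
    words = answer.strip().lower().split()
    first = words[0].strip(".,!:;") if words else ""
    return first if first in ("yes", "no") else "unclear"


def failed_checks(image: bytes, judge: Judge, checks: Sequence[Check] = CHECKS) -> List[str]:
    """Return the questions whose answer was not the passing one.

    An unclear answer is treated as a failure so that ambiguous replies
    never let an asset through by default.
    """
    return [
        c.question
        for c in checks
        if normalize(judge(image, c.question)) != c.passing_answer
    ]


def stub_judge(image: bytes, question: str) -> str:
    """Stand-in for a real VLM call: reports distorted text, approves the rest."""
    if "distorted text" in question:
        return "Yes, the sign in the background is garbled."
    return "Yes."
```

Calling `failed_checks(b"", stub_judge)` with the stub returns only the distorted-text question, which is the signal the pipeline would use to trap the image before the user sees it.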

Building Quality Gates into Automated Pipelines

Implementing automated evaluations requires a fundamental shift in how developers interact with generative AI. Instead of making synchronous, single-shot API calls directly to a generation endpoint, engineering teams must build multi-step pipelines.

A modern AI architecture treats generation as just the first node in a broader workflow. Once the image or video is synthesized, the payload is immediately passed to a Quality Gate node. This gate executes the visual evaluation against the defined business logic. If the evaluation returns a passing score, the asset is delivered to the user. If it fails, the pipeline automatically triggers a retry loop with adjusted parameters, or it routes the asset to an asynchronous human review queue.
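The generate, gate, retry, and escalate flow described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a reference implementation: `generate` and `evaluate` are hypothetical callables supplied by the caller, the "adjusted parameters" are reduced to a changing seed, and the threshold and attempt count are arbitrary.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical signatures (assumptions for this sketch):
Generate = Callable[[str, int], bytes]  # (prompt, seed) -> asset bytes
Evaluate = Callable[[bytes], float]     # asset bytes -> quality score in [0, 1]


@dataclass
class GateResult:
    status: str             # "delivered" or "human_review"
    asset: Optional[bytes]  # last asset produced
    attempts: int           # number of generation attempts used


def run_with_quality_gate(
    prompt: str,
    generate: Generate,
    evaluate: Evaluate,
    max_attempts: int = 3,
    pass_threshold: float = 0.8,
) -> GateResult:
    """Generate, score, and retry with a new seed until the gate passes."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    asset: Optional[bytes] = None
    for attempt in range(1, max_attempts + 1):
        # The attempt number doubles as the seed, so each retry differs.
        asset = generate(prompt, attempt)
        if evaluate(asset) >= pass_threshold:
            return GateResult("delivered", asset, attempt)
    # Every attempt failed the gate: hand the last asset to human review.
    return GateResult("human_review", asset, max_attempts)


def fake_generate(prompt: str, seed: int) -> bytes:
    """Stand-in generator that encodes the prompt and seed."""
    return f"{prompt}#{seed}".encode()


def fake_evaluate(asset: bytes) -> float:
    """Stand-in evaluator that only approves the asset made with seed 2."""
    return 0.9 if asset.endswith(b"#2") else 0.3


result = run_with_quality_gate("vintage jacket on model", fake_generate, fake_evaluate)
print(result.status, result.attempts)  # prints: delivered 2
```

If failures were independent between attempts (an assumption that rarely holds exactly, since some prompts are simply harder than others), a 5 percent per-attempt failure rate would leave about 0.05³ = 0.0125 percent of requests exhausting all three attempts and landing in the review queue.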

Platforms like apiai.me streamline this exact architectural pattern. By chaining generation endpoints with native Quality Gate nodes, teams can enforce automated moderation and quality control without wiring together disparate microservices. The apiai.me Auto-Eval system allows developers to score every pipeline run against plain-English criteria—outputting deterministic pass, review, or fail states that SaaS applications can safely ingest. This encapsulates the complexity of the retry loop entirely on the server side, keeping client logic clean and user experiences frictionless.

Defining Plain-English Evaluation Rubrics

The power of VLM-based evaluations lies in their accessibility. Developers do not need to train custom classifiers or write complex heuristic algorithms to detect bad outputs. Because these evaluators understand natural language, teams can codify their specific business logic and brand guidelines directly into plain-English rubrics.

According to McKinsey, clearly defining operational guardrails actually accelerates AI deployment, as it removes the organizational fear of shipping unpredictable features. When drafting evaluation criteria for visual SaaS products, teams should construct rubrics around several distinct vectors:

- Anatomical Coherence: Asserting that generated human subjects possess the correct number of limbs, fingers, and standard facial proportions without warping or uncanny-valley artifacts.

- Prompt Adherence: Verifying that the core subject requested by the user is actually present and serves as the focal point of the image, rather than being relegated to the background.

- Brand Safety and Moderation: Ensuring the absence of explicit content, copyrighted logos, competitor watermarks, or culturally sensitive imagery that could violate platform terms of service.

- Physical Realism: Checking for impossible physics, such as objects floating without support, inconsistent shadow casting, or mismatched lighting between a product and an inserted background.

- Textual Integrity: Confirming that any text intentionally rendered in the image is spelled correctly and legible, while verifying that no random, hallucinated text appears in the background.

By codifying these rules into evaluation prompts, platform engineers transform vague aesthetic guidelines into strict, executable software assertions.
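One way to picture a rubric as an executable assertion is sketched below. The five criteria mirror the list above, but the `Criterion` type, the choice of which rules are blocking, the prompt wording, and the mapping onto pass, review, and fail are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Dict, Sequence


@dataclass(frozen=True)
class Criterion:
    name: str
    rule: str       # plain-English rule shown to the evaluating model
    blocking: bool  # True: a violation fails the asset; False: it flags it for review


# Which rules are blocking is an illustrative choice, not a recommendation.
RUBRIC = [
    Criterion("anatomical_coherence",
              "Human subjects have the correct number of limbs and fingers "
              "and unwarped facial proportions.", True),
    Criterion("prompt_adherence",
              "The requested subject is present and is the focal point.", True),
    Criterion("brand_safety",
              "No explicit content, copyrighted logos, or competitor "
              "watermarks appear.", True),
    Criterion("physical_realism",
              "Shadows, lighting, and object support are physically "
              "consistent.", False),
    Criterion("textual_integrity",
              "Intended text is legible and correctly spelled, and no stray "
              "text appears.", False),
]


def build_eval_prompt(rubric: Sequence[Criterion]) -> str:
    """Render the rubric as one instruction block for the evaluating model."""
    lines = ["For each rule, reply with the rule name followed by PASS or FAIL."]
    lines += [f"- {c.name}: {c.rule}" for c in rubric]
    return "\n".join(lines)


def verdict(results: Dict[str, bool], rubric: Sequence[Criterion] = RUBRIC) -> str:
    """Collapse per-criterion results (True means satisfied) into one state.

    A criterion missing from `results` is treated as violated.
    """
    violated = [c for c in rubric if not results.get(c.name, False)]
    if any(c.blocking for c in violated):
        return "fail"
    return "review" if violated else "pass"
```

Splitting criteria into blocking and advisory is one possible design: a six-fingered hand is rejected outright, while a slightly inconsistent shadow is routed to a reviewer instead of burning another generation attempt.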

Continuous Telemetry and Model Routing

Automated evaluations do more than just block bad generations from reaching the end user; they provide critical telemetry for your AI infrastructure. In a rapidly evolving ecosystem, new foundation models for image and video generation are released monthly. Evaluating which model to adopt is often an imprecise guessing game based on marketing claims.

When you attach an automated evaluation score to every generation in your platform, you steadily accumulate a proprietary dataset of model performance. You can objectively track whether switching from one foundation model to another reduces your anatomical failure rate from 4 percent to 1 percent. As highlighted by MIT Technology Review, the industry is aggressively moving toward quantifiable AI metrics to cut through vendor hype, demanding verifiable proof of reliability over flashy benchmark scores.
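To make the model comparison concrete, the sketch below aggregates logged evaluation results into a failure rate per model and criterion. The record layout, the model names, and the sample counts are invented for illustration; they simply reproduce the 4 percent versus 1 percent comparison mentioned above.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

# Assumed log record layout for this sketch: (model_name, criterion, passed)
Record = Tuple[str, str, bool]


def failure_rates(records: Iterable[Record]) -> Dict[Tuple[str, str], float]:
    """Return the fraction of failed evaluations per (model, criterion) pair."""
    totals: Dict[Tuple[str, str], int] = defaultdict(int)
    failures: Dict[Tuple[str, str], int] = defaultdict(int)
    for model, criterion, passed in records:
        key = (model, criterion)
        totals[key] += 1
        if not passed:
            failures[key] += 1
    return {key: failures[key] / totals[key] for key in totals}


# Synthetic sample: 100 runs per model on the same criterion.
records = (
    [("model-a", "anatomy", True)] * 96 + [("model-a", "anatomy", False)] * 4
    + [("model-b", "anatomy", True)] * 99 + [("model-b", "anatomy", False)] * 1
)

for (model, criterion), rate in sorted(failure_rates(records).items()):
    print(f"{model} {criterion}: {rate:.0%}")
```

In practice the records would come from the evaluation logs rather than a hard-coded list, and a comparison like this is only meaningful when both models were scored on a similar mix of prompts.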

Furthermore, this telemetry enables dynamic model routing. If your evaluation metrics show that a faster, cheaper model struggles with dense architectural prompts but excels at abstract backgrounds, your pipeline can intelligently route requests based on the user’s input context. You optimize for cost and speed where appropriate, and default to heavier, more expensive models only when the evaluation failure rates dictate the necessity.
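A routing rule of this kind can be very small. The sketch below is one hedged interpretation: it assumes an upstream step has already labeled each request with a prompt category, and the model names, categories, and 2 percent tolerance are hypothetical values chosen for the example.

```python
from typing import Dict, Tuple

# Observed failure rates keyed by (model, prompt_category); the values are
# made up for this sketch and would normally come from evaluation telemetry.
OBSERVED_FAILURE_RATES: Dict[Tuple[str, str], float] = {
    ("fast-model", "abstract_background"): 0.01,
    ("fast-model", "architecture"): 0.09,
}


def pick_model(
    category: str,
    failure_rates: Dict[Tuple[str, str], float],
    cheap: str = "fast-model",
    heavy: str = "heavy-model",
    max_failure_rate: float = 0.02,
) -> str:
    """Use the cheap model only where its measured failure rate is acceptable.

    Categories with no telemetry yet fall back to the heavy model.
    """
    rate = failure_rates.get((cheap, category))
    if rate is not None and rate <= max_failure_rate:
        return cheap
    return heavy


print(pick_model("abstract_background", OBSERVED_FAILURE_RATES))  # fast-model
print(pick_model("architecture", OBSERVED_FAILURE_RATES))         # heavy-model
```

Defaulting unknown categories to the heavier model trades cost for safety; a team more sensitive to spend could invert that default and rely on the quality gate to catch the resulting failures.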

What to Watch

The transition from manual inspection to automated evaluation is the dividing line between experimental AI prototypes and robust, enterprise-grade software.

- Pipelines over Endpoints: Expect SaaS engineering teams to entirely abandon direct, single-shot model calls in favor of orchestrated pipelines that embed evaluation and retry logic by default.

- Evaluation as a Core Metric: Platform engineers will increasingly monitor “Eval Pass Rate” on their operational dashboards right alongside traditional SaaS metrics like uptime, database load, and API latency.

- Domain-Specific Rubrics: As vision-language models become faster and cheaper to query, expect companies to develop highly specialized, proprietary evaluation prompts tuned specifically for their vertical—such as an e-commerce platform that strictly measures product lighting consistency.

- Zero-Downtime Model Swaps: With strict automated rubrics in place, teams will be able to hot-swap underlying generation models in production without fear, trusting their evaluation gates to catch regressions instantly.
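
The “Eval Pass Rate” metric from the list above could be tracked with something as simple as a rolling window over recent gate outcomes. The class name, window size, and alert threshold below are assumptions for illustration only.

```python
from collections import deque
from typing import Deque, Optional


class PassRateMonitor:
    """Rolling pass rate over the most recent evaluation outcomes."""

    def __init__(self, window: int = 500, alert_below: float = 0.95) -> None:
        # A deque with maxlen silently drops the oldest outcome when full.
        self._outcomes: Deque[bool] = deque(maxlen=window)
        self.alert_below = alert_below

    def record(self, passed: bool) -> None:
        """Store the outcome of one quality-gate evaluation."""
        self._outcomes.append(passed)

    @property
    def pass_rate(self) -> Optional[float]:
        """Fraction of recent evaluations that passed, or None if empty."""
        if not self._outcomes:
            return None
        return sum(self._outcomes) / len(self._outcomes)

    def should_alert(self) -> bool:
        """True when the rolling pass rate has dropped below the threshold."""
        rate = self.pass_rate
        return rate is not None and rate < self.alert_below


monitor = PassRateMonitor(window=4, alert_below=0.9)
for outcome in (True, True, True, False):
    monitor.record(outcome)
print(monitor.pass_rate, monitor.should_alert())  # prints: 0.75 True
```

A sudden drop in this number right after a model swap is the kind of regression signal the last bullet refers to.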