Media production has hit a bottleneck not of original creation, but of high-volume adaptation. Universal image segmentation models have collapsed the boundaries between semantic, instance, and panoptic visual parsing, giving engineering teams the ability to programmatically dismantle and reassemble images at scale. For modern publishers and agencies, this represents a profound structural shift. Instead of treating photographs as flat, static grids of pixels, automated editorial pipelines can now interact with visual media as dynamic scenes composed of distinct, addressable objects. Because these architectures fold previously fragmented computer vision tasks into a single model, media companies can automate smart cropping, contextual background replacement, and surgical content moderation without scaling up expensive manual retouching teams.

The End of Fragmented Computer Vision

Historically, teaching a machine to understand the contents of an image required a brittle, highly specialized patchwork of different models. If an engineering team needed to identify broad regions like “sky” or “street,” they deployed a semantic segmentation model. If they needed to count specific people in a crowd, they spun up a separate instance segmentation model. Combining these insights into a complete mapping of the image—known as panoptic segmentation—required complex heuristics, massive compute overhead, and constant pipeline maintenance.

The introduction of universal architectures, championed by models like Mask2Former and OneFormer, fundamentally altered this landscape. By reframing all image segmentation tasks as a single mask classification problem, these architectures eliminated the need for specialized, task-specific model designs. Mask2Former uses one architecture for all three tasks but is still trained separately for each. OneFormer goes a step further and conditions a single trained model on a task input, so the same weights can output semantic labels, instance boundaries, or complete panoptic maps depending on the task requested.

This consolidation is not just an academic curiosity; it is a major efficiency driver for production environments. The original Mask2Former paper, presented at CVPR 2022, reports that a single universal architecture outperforms the best specialized architectures of its time on panoptic, instance, and semantic segmentation benchmarks. For platform engineers, this means fewer model families to host, no need to run several models over the same image, and a substantial reduction in the orchestration complexity required to parse complex editorial imagery.

Why Multi-Platform Media Demands Semantic Precision

The demands of modern content distribution have pushed editorial pipelines to the breaking point. A single hero photograph purchased from a wire service must now be dynamically adapted into a 16:9 banner for desktop web, a 9:16 vertical crop for TikTok and Instagram Reels, and a 4:5 portrait crop for newsfeed distribution. Relying on naive “smart crop” algorithms, which typically hunt for the highest contrast pixels and center the crop around them, frequently results in unusable assets: a speaker’s hands are cut off, or crucial context in a news photo is cropped out.

According to analysis by Digiday, the shift toward multi-platform distribution has forced digital publishers to produce up to five times as many asset variations per story compared to just three years ago. Manual cropping at this scale is economically unviable, making algorithmic adaptation a mandatory capability for modern media infrastructure.

Universal segmentation addresses the aspect ratio crisis by introducing subject-aware reframing. Because models like OneFormer assign instance IDs to specific subjects, an automated pipeline can be instructed to lock onto “person 1” and “person 2” while discarding the irrelevant background space. The pipeline can compute the union bounding box of the segmented subjects, add a padding margin, and expand that box to the target aspect ratio, so each platform format is cropped around the editorial focal point rather than around whatever region happens to have the most contrast.
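The reframing step described above can be sketched in a few lines of plain Python. This is a minimal illustration, not a production implementation: the names `mask_to_box`, `union_box`, and `subject_crop` are hypothetical, masks are assumed to be row-major lists of 0/1 values, and the 10 percent padding default is an arbitrary choice.

```python
from typing import Iterable, List, Tuple

Box = Tuple[int, int, int, int]  # (left, top, right, bottom); right/bottom exclusive


def mask_to_box(mask: List[List[int]]) -> Box:
    """Tight bounding box around the non-zero pixels of a binary mask."""
    rows = [y for y, row in enumerate(mask) if any(row)]
    cols = [x for x in range(len(mask[0])) if any(row[x] for row in mask)]
    if not rows:
        raise ValueError("mask is empty")
    return (cols[0], rows[0], cols[-1] + 1, rows[-1] + 1)


def union_box(boxes: Iterable[Box]) -> Box:
    """Smallest box that contains every subject box."""
    boxes = list(boxes)
    if not boxes:
        raise ValueError("at least one subject box is required")
    return (
        min(b[0] for b in boxes),
        min(b[1] for b in boxes),
        max(b[2] for b in boxes),
        max(b[3] for b in boxes),
    )


def subject_crop(boxes: Iterable[Box], image_w: int, image_h: int,
                 aspect_w: int, aspect_h: int, padding: float = 0.1) -> Box:
    """Crop of the requested aspect ratio centred on the selected subjects."""
    left, top, right, bottom = union_box(boxes)
    # Pad the subject box on every side by a fraction of its size.
    w = (right - left) * (1 + 2 * padding)
    h = (bottom - top) * (1 + 2 * padding)
    # Grow the short side until the box matches the target ratio.
    target = aspect_w / aspect_h
    if w / h < target:
        w = h * target
    else:
        h = w / target
    # If the crop is larger than the image, shrink it uniformly.
    # In that case the subjects may no longer fit entirely.
    scale = min(1.0, image_w / w, image_h / h)
    w, h = w * scale, h * scale
    # Centre on the subjects, then clamp the crop inside the image.
    cx, cy = (left + right) / 2, (top + bottom) / 2
    x0 = min(max(cx - w / 2, 0), image_w - w)
    y0 = min(max(cy - h / 2, 0), image_h - h)
    return (round(x0), round(y0), round(x0 + w), round(y0 + h))
```

Calling `subject_crop` once per target format (16:9, 9:16, 4:5) with the same subject boxes yields one crop per platform from a single segmentation pass.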

Surgical Moderation and Automated Compliance

Content moderation in high-volume publishing is fraught with nuance. In the context of news reporting, documentary filmmaking, or user-generated marketplace listings, an image may contain a sensitive, copyrighted, or inappropriate element in the deep background. Rejecting the entire image disrupts the editorial flow, but manually reviewing and blurring elements creates massive operational bottlenecks.

You cannot moderate what your systems cannot understand. Universal segmentation enables a shift from binary “pass/fail” moderation to surgical intervention. By mapping every object in a scene, a pipeline can isolate a specific branded logo on a t-shirt or an inappropriate gesture in a crowd without touching the primary subject.

Research from Gartner projects that by 2026, over 80 percent of enterprises will have integrated AI APIs to automate core operational tasks, with content moderation and compliance standing out as immediate high-ROI targets. This is where orchestrating multiple AI capabilities becomes critical. By integrating these segmentation models into node-based orchestrators, such as the pipelines built on apiai.me, platform engineers can design workflows where Quality Gates automatically branch logic based on the segmentation output. If a restricted object is segmented, the pipeline can flag the exact pixel region it occupies and apply a localized blur or generative fill to that region before the image ever reaches the CMS.
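As a rough sketch of what localized intervention means in practice, the snippet below pixelates only the pixels covered by restricted segments and leaves the rest of the image untouched. The data layout is an assumption made for illustration: images are grayscale row-major lists, each segment is a dict with hypothetical `label` and `mask` keys, and `pixelate_masked` and `moderate` are illustrative names rather than part of any particular API.

```python
from typing import Dict, List, Set, Tuple

Image = List[List[int]]  # grayscale pixel values, row-major
Mask = List[List[int]]   # 1 where the segment covers the pixel, else 0


def pixelate_masked(image: Image, mask: Mask, block: int = 8) -> Image:
    """Return a copy of the image with only the masked pixels pixelated."""
    h, w = len(image), len(image[0])
    out = [row[:] for row in image]  # never mutate the source asset
    for by in range(0, h, block):
        for bx in range(0, w, block):
            ys = range(by, min(by + block, h))
            xs = range(bx, min(bx + block, w))
            # Mean of the whole block, taken from the original pixels.
            mean = sum(image[y][x] for y in ys for x in xs) // (len(ys) * len(xs))
            for y in ys:
                for x in xs:
                    if mask[y][x]:
                        out[y][x] = mean
    return out


def moderate(image: Image, segments: List[Dict], restricted: Set[str],
             block: int = 8) -> Tuple[Image, List[str]]:
    """Pixelate every restricted segment and report which labels were hit."""
    flagged = []
    for seg in segments:
        if seg["label"] in restricted:
            image = pixelate_masked(image, seg["mask"], block)
            flagged.append(seg["label"])
    return image, flagged
```

Returning the flagged labels alongside the redacted image lets a downstream gate decide whether the asset can be published automatically or should still be seen by a reviewer.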

Generative Fill and the Dynamic Layout Revolution

The most transformative application of universal image segmentation in media is its synergy with generative AI. Isolating subjects with pixel-perfect precision is only the first half of the equation; the second is contextually replacing the surrounding environment to fit new editorial or advertising narratives.

Consider an e-commerce platform automatically generating seasonal ad campaigns. Universal segmentation cleanly extracts the product. From there, diffusion models can synthesize a new environment around it, placing a summer sneaker onto a snow-covered street for a winter promotion. Because the segmentation mask acts as an alpha channel (typically after some edge refinement), the generative model knows where the product ends and the synthesized background begins, preserving the integrity of the original asset.
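The role of the mask as an alpha channel comes down to a per-pixel blend, out = alpha * product + (1 - alpha) * background. The sketch below shows that blend on grayscale row-major lists. The function names are hypothetical, and whether a given generative endpoint expects the product mask or its inverse is an assumption to check against that endpoint's documentation.

```python
from typing import List

Plane = List[List[float]]


def composite(foreground: Plane, background: Plane, alpha: Plane) -> List[List[int]]:
    """Per-pixel blend: alpha=1 keeps the product, alpha=0 keeps the new background."""
    return [
        [round(a * f + (1 - a) * b) for f, b, a in zip(frow, brow, arow)]
        for frow, brow, arow in zip(foreground, background, alpha)
    ]


def regenerate_region(alpha: Plane) -> Plane:
    """Inverted mask: 1 where a generative model is allowed to synthesise pixels."""
    return [[1 - a for a in row] for row in alpha]
```

Fractional alpha values along the product's edge (for example 0.5) blend the two sources, which is how a feathered mask avoids a hard cut-out outline.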

According to Bain & Company, the strategic deployment of automation and generative AI can reduce creative production costs by up to 30 percent, freeing editorial and design teams to focus on high-level narrative strategy rather than repetitive pixel-pushing. By chaining segmentation tools with generative outpainting endpoints, agencies can generate thousands of highly localized, platform-specific ad variants from a single core asset in minutes.

Building Unified Visual Workflows

Despite the power of universal segmentation models, deploying them from scratch remains a formidable infrastructure challenge. Standing up dedicated GPU instances to run heavy transformer models requires handling cold starts, managing container orchestration, and dealing with significant idle compute costs when editorial traffic spikes subside.

The industry is rapidly shifting away from self-hosted, monolithic model deployments toward API-first ecosystems. For lean engineering teams at media companies, the goal is not to become computer vision researchers, but to reliably orchestrate AI capabilities to solve business problems.

As reported by TechCrunch, the primary bottleneck for technical founders scaling AI applications is no longer model capability, but rather pipeline orchestration—the ability to reliably chain multiple AI inferences without introducing latency and catastrophic failure cascades. Instead of managing infrastructure for OneFormer alongside separate hardware for generative backends, teams can leverage unified endpoints. By querying the apiai.me tools catalog, engineers can sequentially trigger precise background removal, generative outpainting, and final image upscaling through a single, managed API layer.
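To make the orchestration concern concrete, here is a minimal sketch of a sequential chain that retries a failing step and stops at the first step that cannot be completed, so a failure does not cascade into later stages. Everything in it is hypothetical: `run_chain` and `StepFailed` are illustrative names, and each step is an ordinary callable standing in for a call to a managed endpoint.

```python
from typing import Any, Callable, List, Tuple

Step = Tuple[str, Callable[[Any], Any]]  # (step name, function applied to the asset)


class StepFailed(RuntimeError):
    """Raised when a step keeps failing after all retry attempts."""


def run_chain(asset: Any, steps: List[Step], max_attempts: int = 2) -> Any:
    """Run steps in order, feeding each output into the next step."""
    for name, step in steps:
        for attempt in range(1, max_attempts + 1):
            try:
                asset = step(asset)
                break  # step succeeded, move on to the next one
            except Exception as exc:
                if attempt == max_attempts:
                    # Stop here so later steps never see a broken input.
                    raise StepFailed(f"{name} failed after {attempt} attempts") from exc
    return asset
```

A caller would pass something like `[("remove_background", remove_bg), ("outpaint", outpaint), ("upscale", upscale)]`, where each function wraps one endpoint call. A real deployment would likely add backoff between attempts and retry only on transient errors.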

This approach allows media companies to build pipelines that include Auto-Eval scoring, where the output of a segmentation and generative fill task is automatically graded against plain-English criteria (e.g., “Ensure the subject is fully visible and the background lighting matches”). If the image passes, it routes directly to publishing; if it falls into a grey area, it routes to a human reviewer.
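The routing logic behind such a gate can be sketched as a simple threshold check. This assumes the evaluator returns a score between 0 and 1. The threshold values, the `GateConfig` and `route_asset` names, and the explicit reject branch for low scores are all assumptions added for illustration; the workflow described above only specifies the publish and human-review paths.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    PUBLISH = "publish"
    HUMAN_REVIEW = "human_review"
    REJECT = "reject"  # assumed branch for clear failures


@dataclass(frozen=True)
class GateConfig:
    pass_threshold: float = 0.85    # at or above: publish automatically
    review_threshold: float = 0.60  # between the two: grey area


def route_asset(score: float, config: GateConfig = GateConfig()) -> Route:
    """Map an evaluation score in [0, 1] to a pipeline branch."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be between 0 and 1")
    if score >= config.pass_threshold:
        return Route.PUBLISH
    if score >= config.review_threshold:
        return Route.HUMAN_REVIEW
    return Route.REJECT
```

Keeping the thresholds in a config object makes it easy to tighten the gate for sensitive content categories without touching the routing code.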

Takeaways for Media Engineering Teams

As you evaluate how to integrate advanced computer vision into your editorial and ad-ops pipelines, focus on orchestration rather than raw model hosting:

- Move past rigid bounding boxes: Audit your current automated cropping and thumbnail generation tools. If they rely on fixed crop boxes or basic contrast heuristics, upgrading to API-driven panoptic segmentation can substantially reduce layout errors and manual corrections.

- Implement surgical moderation: Transition your compliance pipelines from full-image rejection to localized intervention. Use segmentation masks to automatically blur or replace specific non-compliant elements without losing the underlying asset.

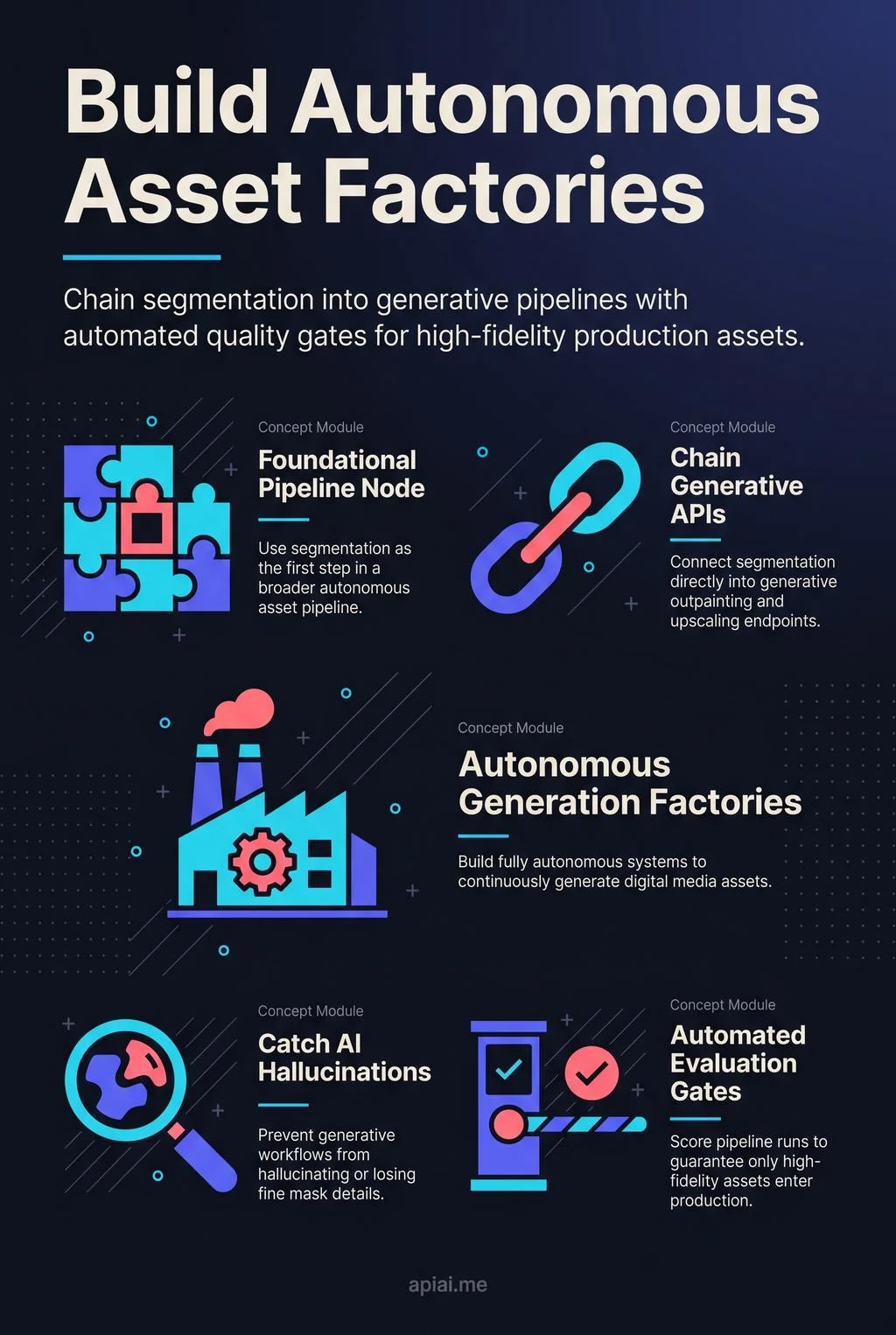

- Chain your workflows: Image segmentation is rarely the final step. Treat it as the foundational node in a broader pipeline. Chain segmentation APIs directly into generative outpainting and upscaling endpoints to build fully autonomous asset generation factories.

- Automate the quality check: Generative pipelines can occasionally hallucinate or fail to preserve fine mask details. Ensure your workflows incorporate automated evaluation gates to score pipeline runs, so that low-fidelity assets are caught before they reach your production environments.